gap analysis

What is gap analysis?

A gap analysis is a method of assessing the performance of a business unit to determine whether business requirements or objectives are being met and, if not, what steps should be taken to meet them.

A gap analysis may also be referred to as a needs analysis, needs assessment or need-gap analysis.

The "gap" in gap analysis refers to the space between "where we are" (the present state) and "where we want to be" (the target state or desired state).

Gap analysis applications

In information technology, gap analysis reports are often used by project managers and process improvement teams as the starting point for an action plan to improve operations. Gap analysis also helps benchmark actual business performance against optimal performance levels.

Performance gaps can be measured across multiple areas of the business, including customer satisfaction, revenue generation, productivity and supply chain cost.

Small businesses, in particular, can benefit from performing gap analyses when deciding how to allocate resources.

In software development, gap analysis tools can document which services or functions have been accidentally left out, which have been deliberately eliminated and which still need to be developed.
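To make those three categories concrete, here is a minimal Python sketch that treats them as set operations. It assumes the team keeps four hypothetical lists: the functions the requirements call for, the ones already implemented, the ones deliberately dropped and the ones on the development backlog. The class, function and field names are illustrative and do not come from any particular gap analysis tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FunctionGaps:
    accidentally_omitted: frozenset[str]
    deliberately_eliminated: frozenset[str]
    still_to_develop: frozenset[str]


def classify_function_gaps(
    required: set[str],
    implemented: set[str],
    eliminated: set[str],
    backlog: set[str],
) -> FunctionGaps:
    """Sort every required-but-unimplemented function into one of three categories."""
    missing = required - implemented
    # Dropped on purpose (for example, by a documented scope decision).
    dropped = missing & eliminated
    # Planned but not built yet; a deliberate elimination takes precedence.
    planned = (missing & backlog) - dropped
    # No decision or plan explains these, so they were left out by accident.
    omitted = missing - dropped - planned
    return FunctionGaps(frozenset(omitted), frozenset(dropped), frozenset(planned))


# Hypothetical inputs for a small web application.
gaps = classify_function_gaps(
    required={"login", "export", "audit_log", "sso"},
    implemented={"login"},
    eliminated={"sso"},
    backlog={"export"},
)
print(sorted(gaps.accidentally_omitted))  # ['audit_log']
```

The useful output is the "accidentally omitted" set: anything required that nobody decided to drop or scheduled to build.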

In compliance initiatives, a gap analysis can compare what certain regulations require with what is currently being done to abide by them.

In human resources (HR), a gap analysis can be done to examine which skills are present in the workforce and what additional skills are needed to improve the organization's competitiveness or efficiency.

How to conduct a gap analysis

The first step in conducting a gap analysis is to establish specific target objectives by looking at the company's mission statement, strategic business goals and improvement objectives.

The next step is to analyze current processes by collecting relevant data on performance levels and how resources are presently allocated to these processes. This data can be collected from a variety of sources depending on what is being analyzed. For example, it may involve looking at documentation, measuring key performance indicators (KPIs) or other success metrics, conducting stakeholder interviews, brainstorming and observing project activities.

After a company compares its target goals against its current state, it can draw up a comprehensive plan. This plan lays out, step by step, how to close the gap between the current and future states and reach the target objectives. This process is often referred to as strategic planning.
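For quantitative objectives, comparing the current and target states comes down to simple arithmetic. The Python sketch below is one way to do it, under stated assumptions: each metric has a single measured value and a single nonzero target, some metrics improve by going down (handle time, for example), and gaps are ranked by their size relative to the target. The `Metric` class, the metric names and the sample figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Metric:
    name: str
    current: float  # measured present-state value
    target: float  # desired future-state value (assumed nonzero)
    higher_is_better: bool = True


def measure_gaps(metrics: list[Metric]) -> list[tuple[str, float, float]]:
    """Return (name, shortfall, relative shortfall) for each metric that misses its target.

    Shortfall is in the metric's own units; relative shortfall is shortfall / |target|.
    Results are sorted so the largest relative gap comes first.
    """
    gaps = []
    for m in metrics:
        if m.target == 0:
            raise ValueError(f"{m.name}: target must be nonzero to compute a relative gap")
        # Flip the sign for metrics where a lower value is the goal.
        shortfall = m.target - m.current if m.higher_is_better else m.current - m.target
        if shortfall > 0:  # zero or negative means the target is already met
            gaps.append((m.name, shortfall, shortfall / abs(m.target)))
    return sorted(gaps, key=lambda g: g[2], reverse=True)


# Hypothetical figures for a customer support team.
metrics = [
    Metric("calls_answered_within_2_min", current=320, target=400),
    Metric("avg_handle_minutes", current=7.5, target=6.0, higher_is_better=False),
    Metric("satisfaction_score", current=4.6, target=4.5),
]
for name, shortfall, relative in measure_gaps(metrics):
    print(f"{name}: short by {shortfall:g} ({relative:.0%} of target)")
```

Ranking by relative rather than absolute shortfall is a design choice: it lets metrics in different units (calls, minutes) be compared side by side, although a company may prefer to weight gaps by business impact instead.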

What's in a gap analysis template?

While gap analysis methodologies can be either concrete or conceptual, gap analysis templates often have the following fundamental components in common.

The current state

A gap analysis template starts off with a column that might be labeled "Current State." It lists the processes, workflows and characteristics an organization seeks to improve, using factual and specific terms.

Areas of focus can be broad, targeting the entire business, or narrow, concentrating on a specific business process. The choice depends on the company's target objectives.

The analysis of these focus areas can be quantitative, such as looking at the number of customer calls answered within a certain time period, or qualitative, such as examining the state of diversity in the workplace.

The future state

The gap analysis report should also include a column labeled "Future State," which outlines the target condition the company wants to achieve.

Like the current state, this section can be drafted in concrete, quantifiable terms, such as aiming to increase the number of fielded customer calls by a certain percentage within a specific time period. Or it may be worded in general terms, such as working toward a more inclusive office culture.

Gap description

This column should first identify whether a gap actually exists between a company's current and future state. If so, the gap description should outline what constitutes the gap and the root causes that contribute to it.

This column states those root causes in objective, clear and specific terms. Like the state descriptions, these components can be quantifiable or qualitative. They might cite factors such as the lack of diversity programs or the difference between the number of currently fielded calls and the target number of fielded calls.

Next steps and proposals

This final column of a gap analysis report should list all the possible solutions that can be implemented to fill the gap between the current and future states.

These proposals must be specific, directly address the factors listed in the gap description and be written in active, compelling terms. They should include clear objectives and a time frame for achieving them.

Some examples of the next steps include hiring a certain number of additional employees to field customer calls, instituting call volume reporting and launching specific office diversity programs and resources.
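The four columns described above map naturally onto a record type. The Python sketch below is a hypothetical representation, not a standard template format: each row holds the current state, future state, gap description and next steps, and an empty gap description stands for "no gap found." The sample rows reuse this article's customer-call and diversity examples with made-up figures.

```python
from dataclasses import dataclass, field


@dataclass
class GapRow:
    current_state: str
    future_state: str
    gap_description: str = ""  # empty means no gap was identified
    next_steps: list[str] = field(default_factory=list)

    def has_gap(self) -> bool:
        return bool(self.gap_description.strip())


COLUMNS = ("Current State", "Future State", "Gap Description", "Next Steps and Proposals")


def render(rows: list[GapRow]) -> str:
    """Render the template as pipe-delimited text, one line per row."""
    lines = [" | ".join(COLUMNS)]
    for row in rows:
        gap = row.gap_description if row.has_gap() else "No gap"
        steps = "; ".join(row.next_steps) if row.has_gap() else "None needed"
        lines.append(" | ".join((row.current_state, row.future_state, gap, steps)))
    return "\n".join(lines)


# Hypothetical rows based on the examples in this article.
rows = [
    GapRow(
        current_state="320 customer calls answered within 2 minutes per day",
        future_state="400 calls per day within 6 months",
        gap_description="80 calls per day short of target; too few call agents",
        next_steps=["Hire 3 additional call agents", "Institute call volume reporting"],
    ),
    GapRow(
        current_state="No formal diversity programs",
        future_state="A more inclusive office culture",
        gap_description="Lack of diversity programs and resources",
        next_steps=["Launch an office diversity program within 12 months"],
    ),
]
print(render(rows))
```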

Gap analysis tools and examples

There are a variety of gap analysis tools and methodologies, and the one a company uses depends on its target objectives. The following are some common gap analysis methods:

McKinsey 7-S Framework

This gap analysis tool, introduced by consulting firm McKinsey & Co., is used to determine which aspects of a company are meeting expectations and which are not. An analyst using the 7-S model examines the characteristics of a business through the lens of seven interdependent elements:

- strategy

- structure

- systems

- staff

- style

- skills

- shared values

The analyst fills in the current and future state for each element, which highlights where gaps exist. The company can then implement a targeted solution to bridge each gap.
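The 7-S framework itself is qualitative, but an analyst who wants a quick way to spot gaps might rate each element. The Python sketch below assumes a hypothetical 1-to-5 rating for the current and future state of each element and reports every element whose current rating falls short. The rating scale and the sample ratings are illustrative assumptions, not part of the framework.

```python
SEVEN_S = ("strategy", "structure", "systems", "staff", "style", "skills", "shared values")


def seven_s_gaps(current: dict[str, int], future: dict[str, int]) -> dict[str, int]:
    """Return {element: rating gap} for each 7-S element rated below its future state."""
    for label, ratings in (("current", current), ("future", future)):
        missing = [s for s in SEVEN_S if s not in ratings]
        if missing:
            raise ValueError(f"{label} ratings missing: {missing}")
    return {s: future[s] - current[s] for s in SEVEN_S if future[s] > current[s]}


# Hypothetical ratings on a 1 (weak) to 5 (strong) scale.
current = {
    "strategy": 4, "structure": 3, "systems": 2, "staff": 3,
    "style": 4, "skills": 2, "shared values": 4,
}
future = {s: 4 for s in SEVEN_S}
gaps = seven_s_gaps(current, future)
print(gaps)  # {'structure': 1, 'systems': 2, 'staff': 1, 'skills': 2}
```

Requiring a rating for all seven elements reflects the framework's premise that the elements are interdependent, so none should be skipped.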

SWOT analysis

SWOT, which stands for strengths, weaknesses, opportunities and threats, is a gap analysis strategy used to identify the internal factors (strengths and weaknesses) and external factors (opportunities and threats) that drive the effectiveness and success of a product, project or person.

Once these factors are identified, the company can choose the best solution by playing to its strengths and allocating resources accordingly, while avoiding potential threats.
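The four SWOT quadrants come from two questions about each factor: is it internal or external, and does it help or hurt? The Python sketch below encodes that grid. The factor list is hypothetical and the helper names are illustrative.

```python
from enum import Enum


class Origin(Enum):
    INTERNAL = "internal"
    EXTERNAL = "external"


QUADRANTS = ("strengths", "weaknesses", "opportunities", "threats")


def swot_quadrant(origin: Origin, helpful: bool) -> str:
    """Internal factors are strengths or weaknesses; external ones are opportunities or threats."""
    if origin is Origin.INTERNAL:
        return "strengths" if helpful else "weaknesses"
    return "opportunities" if helpful else "threats"


def build_swot(factors: list[tuple[str, Origin, bool]]) -> dict[str, list[str]]:
    """Group (description, origin, helpful) factors into the four SWOT quadrants."""
    grid: dict[str, list[str]] = {q: [] for q in QUADRANTS}
    for description, origin, helpful in factors:
        grid[swot_quadrant(origin, helpful)].append(description)
    return grid


# Hypothetical factors for a customer support project.
factors = [
    ("Experienced support staff", Origin.INTERNAL, True),
    ("No call volume reporting", Origin.INTERNAL, False),
    ("Growing demand in a new region", Origin.EXTERNAL, True),
    ("New competitor entering the market", Origin.EXTERNAL, False),
]
for quadrant, items in build_swot(factors).items():
    print(f"{quadrant}: {', '.join(items) or '(none)'}")
```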

Nadler-Tushman model

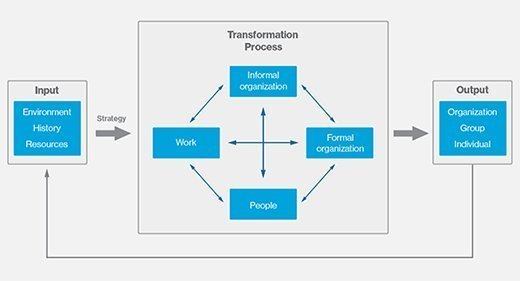

The Nadler-Tushman organizational congruence model, named after Columbia University professors David A. Nadler and Michael L. Tushman, examines how business processes work together and how gaps affect the operational efficiency of the organization as a whole.

The model helps to identify these operational gaps by analyzing the company's operational system as one that transforms inputs into outputs. It divides the business processes into three groups: input, transformation and output. Input includes the operational environment, tangible and intangible resources used and the company culture.

Transformation encompasses the existing systems, people and project activities that convert input into output. Outputs can occur at the system, group or individual level.

The Nadler-Tushman model puts a spotlight on how gaps can arise from inadequate inputs or from transformation functions that fail to work together cohesively. It also shows how gaps in the outputs can point to problems in the inputs and transformation functions.

This model highlights how well the various components fit together, or how congruent they are. The more congruent these parts are, the better a company performs. The Nadler-Tushman model is also dynamic, changing over time.