TechTarget News

News from TechTarget's global network of independent journalists. Stay current with the latest news stories. Browse thousands of articles covering hundreds of focused tech and business topics available on TechTarget's platform.

Latest News

-

26 Apr 2024

Alphabet earnings show drive to boost Google Cloud and AI adoption

By Cliff Saran

The parent company of Google has increased artificial intelligence R&D spending and raised cloud sales commissions to boost adoption of Google Cloud

-

26 Apr 2024

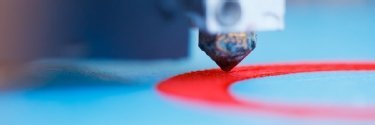

INDO-MIM taps 3D printing in precision manufacturing

By Kavitha Srinivasa

The precision metal parts manufacturer is using HP’s Metal Jet S100 printers to produce components using metal powder for the automotive, healthcare and other industries

-

25 Apr 2024

Rubrik IPO to grow platform, reach

By Tim McCarthy

Rubrik goes public, becoming the first data backup vendor to do so in years. It plans to expand its security cloud software and customer base with an influx of funding.

-

25 Apr 2024

Salesforce Financial Services Cloud gets GenAI for banking

By Don Fluckinger

If Salesforce can talk the highly conservative, highly skeptical and highly regulated financial industry into adopting GenAI, the company could win over a lot more customers.

-

25 Apr 2024

Dymium scares ransomware attacks with honeypot specters

By Tim McCarthy

Dymium, a security startup that recently emerged from stealth, offers ransomware defense for data stores with a network of honeypot traps for spoofing attackers.

-

25 Apr 2024

Progress being made on gender diversity in cyber

By Alex Scroxton

Women make up a higher percentage of new entrants to the cyber security profession, particularly among younger age groups, and are increasingly taking up leadership positions and hiring roles, but challenges still persist

-

25 Apr 2024

More evidence emerges that Post Office executive misled High Court judge

By Karl Flinders

Evidence given during the questioning of a 35-year Post Office veteran reveals the lengths the Post Office went to in hiding computer system vulnerabilities

-

25 Apr 2024

Cisco zero-day flaws in ASA, FTD software under attack

By Alexander Culafi

Cisco revealed that a nation-state threat campaign dubbed 'ArcaneDoor' exploited two zero-day vulnerabilities in its Adaptive Security Appliance and Firepower Threat Defense products.

-

25 Apr 2024

UK gigabit maintains steady progress

By Joe O’Halloran

Latest study of UK communications market from national regulator finds access to gigabit broadband and 5G mobile coverage has risen over past 12 months

-

25 Apr 2024

Executive interview: Salesforce AI head discusses dynamic flowcharts

By Cliff Saran

Business workflows can usually be mapped onto flowcharts which depict the set of actions an IT system takes, but large language models can make this dynamic, according to Jayesh Govindarajan, senior vice-president of AI at Salesforce

-

25 Apr 2024

Oracle expands generative AI across its CX applications

By Don Fluckinger

Oracle beefs up its Fusion Cloud Customer Experience applications with GenAI tools for users of its sales, field service, marketing and contact center applications.

-

25 Apr 2024

Meta chief lays out long-term AI plan

By Cliff Saran

During the company’s first quarter earnings call, Mark Zuckerberg and chief financial officer Susan Li discussed Meta’s long-term bet on AI

-

25 Apr 2024

Zero trust is a strategy, not a technology

By Aaron Tan

Zero-trust security should be seen as a strategy to protect high-value assets and is not tied to a specific technology or product, says the model’s creator John Kindervag

-

25 Apr 2024

Consolidation and growth are key MSP market trends

By Simon Quicke

Market analysis from tech investment player reveals the factors underpinning continued growth on both sides of the Atlantic

-

25 Apr 2024

Extreme Connect 2024: AI in networks to live or die by trust

By Joe O’Halloran

Cloud networking provider unveils hub for research, development and innovation in networking previews, tapping AI to offer a new way to design, optimise and deploy networks

-

24 Apr 2024

Lenovo, AMD broaden AI options for customers

By Adam Armstrong

Lenovo is expanding its partnership with AMD to bring more options for servers and HCI devices aimed at AI. It also launched an AI advisory and professional services offering for customers.

-

24 Apr 2024

Mandatory MFA pays off for GitHub and OSS community

By Alex Scroxton

Mandating multifactor authentication for select developers has been a huge success for GitHub, the platform reports, and now it wants to go further

-

24 Apr 2024

AtScale adds semantic layer support for AI, GenAI models

By Eric Avidon

The vendor's new platform update centers around decision-making flexibility, collaboration and community, and includes a metadata hub along with support for advanced applications.

-

24 Apr 2024

Critical CrushFTP zero-day vulnerability under attack

By Arielle Waldman

While a patch is now available, a critical CrushFTP vulnerability came under attack as a zero-day and could allow attackers to exfiltrate all files on the server.

-

24 Apr 2024

Coalition: Insurance claims for Cisco ASA users spiked in 2023

By Arielle Waldman

Coalition urged enterprises to be cautious when using Cisco and Fortinet network boundary devices as attackers can leverage the attack vectors to gain initial access.

-

24 Apr 2024

TD Synnex launches group to focus on AI

By Billy MacInnes

Distributor forms group to bring together businesses and individuals with different skills and resources to maximise benefits of artificial intelligence

-

24 Apr 2024

GitHub vulnerability leaks sensitive security reports

By Arielle Waldman

The vulnerability is triggered when GitHub users correct code or other mistakes they discover on repositories. But GitHub does not believe it warrants a fix.

-

24 Apr 2024

Cyber training leader KnowBe4 to buy email security firm Egress

By Alex Scroxton

Security awareness training and phishing simulation specialist KnowBe4 is to buy email security expert Egress

-

24 Apr 2024

Experts: IBM buy could change HashiCorp open source equation

By Beth Pariseau

IBM will buy HashiCorp for $6.5 billion, prompting speculation that being brought under the same roof as Red Hat could alter Hashi's open source trajectory.

-

24 Apr 2024

TikTok ban sails through US Senate

By Alex Scroxton

A law that will ban TikTok in the US unless its owner sells up pronto passed the US Senate by a landslide majority after being included in a package of military aid

-

24 Apr 2024

AI firm saves a million in shift to Pure FlashBlade shared storage

By Antony Adshead

AI consultancy Crater Labs spent vast amounts of time managing server-attached drives to ensure GPUs were saturated. A shift to all-flash Pure Storage slashed that to almost zero

-

24 Apr 2024

Education will be key to good AI regulation: A view from the USA

By Alex Scroxton

Computer Weekly sat down with Salesforce’s vice-president of federal government affairs, Hugh Gamble, to find out how the US is forging a path towards AI regulation, and how things look from Capitol Hill

-

24 Apr 2024

HubSpot embraces GenAI with Service Hub updates and more

By Don Fluckinger

A reimagined, generative AI-infused Content Hub leads the way for a passel of HubSpot updates for users of its sales, service, marketing and digital payment platforms.

-

24 Apr 2024

Snowflake targets enterprise AI with launch of Arctic LLM

By Eric Avidon

The data cloud vendor's open source LLM was designed to excel at business-specific tasks, such as generating code and following instructions, to enable enterprise-grade development.

-

24 Apr 2024

SAP earnings rise, but no support extension

By Cliff Saran

Support for SAP ECC is due to end in 2027. The company hopes customers will choose to buy into its business AI portfolio

-

24 Apr 2024

ITV News tech failures nearly caused ‘holes’ in live broadcasts

By Clare McDonald

Software purchased by ITN to be used to create bulletins and other content for ITV News is still experiencing problems almost a year after its introduction

-

24 Apr 2024

Lords debate amendment to law on use of computer evidence in light of Post Office scandal

By Karl Flinders

Peers to debate amending the law on the use of computer evidence in court, which is partly to blame for the wrongful prosecutions of hundreds of former subpostmasters

-

24 Apr 2024

HPE looks to go beyond standards requirements with Wi-Fi 7 access points

By Joe O’Halloran

HPE announces Wi-Fi 7 wireless access points designed to provide a managed ‘comprehensive’ edge IT solution offering extended user and IoT connectivity, in-line processing, enhanced security and maximised performance

-

24 Apr 2024

UK altnets claim to outpace Openreach in UK fibre

By Joe O’Halloran

Research from Independent Networks Cooperation Association finds that despite challenges, alternative broadband providers enjoyed robust growth in 2023

-

24 Apr 2024

Mitel sets out UC strategy

By Simon Quicke

Comms player shares three-pronged approach with partners and customers

-

23 Apr 2024

Splunk-Cribl lawsuit yields mixed result for both companies

By Beth Pariseau

A California jury found that Cribl did infringe on Splunk's copyright but awarded damages of only $1. Both sides declared victory, and Splunk vowed to seek injunctive relief.

-

23 Apr 2024

SAP earnings for Q1 indicate strong cloud growth

By Jim O'Donnell

SAP's cloud revenue for the first quarter of 2024 indicates healthy growth and sets the stage as customers plan cloud migrations and implementations of business AI functionalities.

-

23 Apr 2024

Veeam acquires Coveware for incident response capabilities

By Tim McCarthy

Coveware will remain operationally independent with its cyberincident capabilities and ransomware research complementing the data backup vendor's recovery offerings.

-

23 Apr 2024

Extreme Connect 2024: Wi-Fi 6E to drive connectivity revolution in infinite enterprise

By Joe O’Halloran

Extreme Networks kicks off annual conference with a launch it says will revolutionise outdoor connectivity through Wi-Fi 6E certification, with first significant customer deployments by a leading entertainment company, a Major League Baseball team and a university

-

23 Apr 2024

FTC bans noncompete agreements in split vote

By Makenzie Holland

Now that the FTC has issued its final rule banning noncompete clauses, it's likely to face a bevy of legal challenges.

-

23 Apr 2024

Gartner's IT services forecast calls for consulting uptick

By John Moore

IT service providers could benefit from a less-constrained tech purchasing climate as enterprises seek to bolster in-house skills with consulting services.

-

23 Apr 2024

Cohesity adds confidential computing to FortKnox

By Tim McCarthy

Cohesity is partnering with Intel to bring confidential computing technology to its FortKnox vault service -- a welcome, if limited, security addition, according to experts.

-

23 Apr 2024

U.S. cracks down on commercial spyware with visa restrictions

By Alexander Culafi

The move marks the latest effort by the U.S. government to curb the spread of commercial spyware, which has been used to target journalists, politicians and human rights activists.

-

23 Apr 2024

GooseEgg proves golden for Fancy Bear, says Microsoft

By Alex Scroxton

Microsoft’s threat researchers have uncovered GooseEgg, a never-before-seen tool being used by Forest Blizzard, or Fancy Bear, in conjunction with vulnerabilities in Windows Print Spooler

-

23 Apr 2024

Enterprise AI: Free, premium or a bolt-on?

By Cliff Saran

SaaS providers will have to offer AI in their product mix. But they need to make a huge upfront investment in AI infrastructure, which impacts revenue

-

23 Apr 2024

Post Office boss used husband’s descriptions in 'Orwellian' ploy to downplay Horizon problems

By Karl Flinders

Post Office CEO sought advice from husband on what words to use in reports to downplay known errors in software, inquiry told.

-

23 Apr 2024

ONC releases Common Agreement Version 2.0 for TEFCA

By Hannah Nelson

ONC has released the TEFCA Common Agreement Version 2.0, as well as Participant and Subparticipant Terms of Participation, to further health data interoperability.

-

23 Apr 2024

AWS boosts Amazon Bedrock GenAI platform, upgrades Titan LLM

By Shaun Sutner

The cloud giant buttressed its GenAI platform with features that make it easier to import, select and build safety guardrails for third-party LLMs from Meta, Cohere, Mistral and others.

-

23 Apr 2024

Mandiant: Attacker dwell time down, ransomware up in 2023

By Rob Wright

Mandiant's 'M-Trends' 2024 report offered positive signs for global cybersecurity but warned that threat actors are shifting to zero-day exploitation and evasion techniques.

-

23 Apr 2024

Nokia boosts Industry 4.0 with MX Grid, Visual Position and Object Detection

By Joe O’Halloran

Comms tech provider makes series of announcements to enable more effective, responsive and agile decision-making for Industry 4.0 segments by processing, and unveils visual position and object detection technology to track industrial assets via camera video feeds

-

23 Apr 2024

Expert investigating Capture system refuses to meet ‘untrustworthy’ Post Office

By Karl Flinders

A former Post Office executive has refused to meet his past employer to discuss the controversial Capture system

-

23 Apr 2024

Vodafone Business aims to help SMEs boost productivity and security

By Joe O’Halloran

Following research showing how small and medium-sized businesses could increase profits and efficiency by making better use of digital tools, UK comms provider unveils campaign to help SMEs source digital tools to boost productivity and security

-

23 Apr 2024

Lords split over UK government approach to autonomous weapons

By Sebastian Klovig Skelton

During a debate on autonomous weapons systems, Lords expressed mixed opinions towards the UK government’s current position, including its reluctance to adopt a working definition and commit to international legal instruments controlling their use

-

22 Apr 2024

Mitre breached by nation-state threat actor via Ivanti flaws

By Alexander Culafi

An unnamed nation-state threat actor breached Mitre through two Ivanti Connect Secure zero-day vulnerabilities, CVE-2023-46805 and CVE-2024-21887, disclosed earlier this year.

-

22 Apr 2024

SAP Emarsys launches Product Finder AI shopping tool

By Don Fluckinger

SAP Emarsys moves deeper into generative AI with Product Finder, a product recommendation tool that promises to improve e-commerce and marketing message relevancy.

-

22 Apr 2024

Fujitsu to cut UK jobs as Post Office scandal fallout hits sales

By Karl Flinders

Japanese supplier’s role in the Post Office Horizon scandal is beginning to hurt its UK business, with job cuts announced

-

22 Apr 2024

Former Sellafield consultant claims the nuclear complex tampered with evidence

By Tommy Greene

Whistleblower Alison McDermott claims former employer Sellafield tampered with metadata in letters used in evidence during an employment tribunal

-

22 Apr 2024

Fujifilm plans to ‘make tape easy’ with Kangaroo SME appliance

By Antony Adshead

Fujifilm to add 100TB SME-focused Kangaroo tape infrastructure in a box to existing 1PB offer, as energy efficiency and security of tape make it alluring to customers

-

22 Apr 2024

Government provides funding to help innovators navigate regs

By Cliff Saran

AI and Digital Hub, backed by almost £2m in funding, will coordinate regulatory advice across CMA, FCA, ICO and Ofcom

-

22 Apr 2024

NCSC announces PwC’s Richard Horne as CEO

By Sebastian Klovig Skelton

Former PwC and Barclays cyber chief Richard Horne set to join UK’s National Cyber Security Centre as CEO

-

22 Apr 2024

Interview: IoT enables Dutch-French company to provide bicycle and scooter rental

By Pat Brans

Dott’s connected system and mobile app allow users to hire vehicles and ensure safe and compliant rides in 40 cities across Europe and Israel

-

22 Apr 2024

Digital Edge punching above its weight in Asia datacentre market

By Aaron Tan

Fast-growing datacentre provider Digital Edge is eyeing business from hyperscalers and counting on its strengths in datacentre operations and local partnerships to stand out from rivals

-

22 Apr 2024

Post Office lawyer was a jack of all trades, but failed his own

By Karl Flinders

Post Office IT scandal inquiry hears how a lawyer was at the centre of the Post Office’s attempts to prevent problems with its IT system becoming public knowledge

-

22 Apr 2024

IT leaders hiring CISOs aplenty, but don’t fully understand the role

By Alex Scroxton

Most businesses now have a CISO, but perceptions of what CISOs are supposed to do, and confusion over the value they offer, may be holding back harmonious relations, according to a report

-

21 Apr 2024

Crime agency criticises Meta as European police chiefs call for curbs on end-to-end encryption

By Bill Goodwin

Law enforcement agencies step up demands for ‘lawful access’ to encrypted communications

-

19 Apr 2024

Cisco charts new security terrain with Hypershield

By Antone Gonsalves

Initially, Hypershield protects software, VMs and containerized applications running on Linux. Cisco's ambition is to eventually broaden its reach.

-

19 Apr 2024

Businesses need to prepare for SEC climate rules, EU's CSRD

By Makenzie Holland

While the SEC's new climate rules and the EU's CSRD are both facing delays, businesses still need to identify methods for collecting and assessing climate data.

-

19 Apr 2024

Report reveals Northern Ireland police put up to 18 journalists and lawyers under surveillance

By Bill Goodwin

Disclosures that the Police Service of Northern Ireland obtained phone communications data from journalists and lawyers lead to renewed calls for inquiry

-

19 Apr 2024

Nexfibre reaches million premises ready for service benchmark

By Joe O’Halloran

Months after commitment to billion-pound investment in broadband infrastructure across the course of 2024, UK wholesale provider claims massive milestone in plan to deliver full-fibre gigabit network to five million premises by 2026

-

19 Apr 2024

OpenTofu forges on with beta feature that drew HashiCorp ire

By Beth Pariseau

Defying a HashiCorp cease and desist, OpenTofu 1.7 beta ships with the removed blocks feature and client-side state encryption support long sought by the Terraform community.

-

19 Apr 2024

Sonatus opens software-defined vehicle R&D, engineering centre in Dublin

By Joe O’Halloran

Software-defined vehicle partner establishes new design centre to take advantage of highly skilled pool of technical and engineering talent, and enable closer collaboration with customers and partners in the UK and EU

-

19 Apr 2024

Businesses confront reality of generative AI in finance

By Lev Craig

As large language models move from pilot projects to full-scale deployment in finance, the industry is facing a mixture of compliance and technological challenges in 2024.

-

19 Apr 2024

CISA: Akira ransomware extorted $42M from 250+ victims

By Alexander Culafi

The Akira ransomware gang, which utilizes sophisticated hybrid encryption techniques and multiple ransomware variants, targeted vulnerable Cisco VPNs in a campaign last year.

-

19 Apr 2024

Decade-long IR35 dispute between IT contractor and HMRC prompts calls for off-payroll revamp

By Caroline Donnelly

An IT contractor’s business is on the brink of insolvency after a decade-long IR35 dispute with HMRC

-

19 Apr 2024

Unisys reveals no link to development of controversial Post Office software

By Karl Flinders

IT supplier finds no evidence that it was involved in the development of controversial Post Office software

-

19 Apr 2024

Tech companies operating with opacity in Israel-Palestine

By Sebastian Klovig Skelton

Tech firms operating in Occupied Palestinian Territories and Israel are falling “woefully short” of their human rights responsibilities amid escalating devastation in Gaza, says Business & Human Rights Resource Centre

-

19 Apr 2024

Ciena optical tech makes light waves in Canada, Indonesia

By Joe O’Halloran

Telcos from Europe and Asia deploy optical comms technology provider’s solutions to adapt to user and performance demand, reaching higher bandwidth and reducing energy requirements

-

19 Apr 2024

CEST: Putting HMRC’s IR35 status checker under the microscope

By Caroline Donnelly

Since its launch in March 2017, HMRC's online IR35 status checker tool has come under fierce criticism and scrutiny, only exacerbated in recent weeks by the disclosure it has not been updated in five years

-

19 Apr 2024

How Manipal Hospitals is driving tech innovations in healthcare

By Aaron Tan

Manipal Hospitals’ video consultation services and a nurse rostering app are among the tech innovations it is spurring to improve patient care and ward operations

-

18 Apr 2024

Meta releases two Llama 3 models, more to come

By Esther Ajao

The social media giant's new open source LLM is telling of the challenges in the open source market and its future ambitions involving multimodal and multilingual capabilities.

-

18 Apr 2024

GitLab Duo plans harness growing interest in platform AI

By Beth Pariseau

GitLab's next release will tie its Duo AI tools to the full DevSecOps pipeline in a bid to capitalize on increased interest in AI automation among platform engineers.

-

18 Apr 2024

Cisco discloses high-severity vulnerability, PoC available

By Arielle Waldman

The security vendor released fixes for a vulnerability that affects Cisco Integrated Management Controller, which is used by devices including routers and servers.

-

18 Apr 2024

Use of AI in business drives increase in consulting services

By John Moore

The slice of businesses using external providers for AI uptake is set to more than double, according to U.S. Census survey data. Industry leaders say that tracks with their outlook.

-

18 Apr 2024

Cyber-resilient storage a final defense against ransomware

By Tim McCarthy

Features to enhance storage cyber resiliency should be table stakes for buyers, experts say. But enhancements are needed to stave off ransomware attacks.

-

18 Apr 2024

Hammerspace reaches everywhere with erasure coding

By Adam Armstrong

Hammerspace has sped up its global file system everywhere it touches, even on white box hardware, with the addition of erasure coding technology it gained through an acquisition.

-

18 Apr 2024

International police operation infiltrates LabHost phishing website used by thousands of criminals

By Bill Goodwin

The Metropolitan Police, working with international police forces, have shut down LabHost, a phishing-as-a-service website that has claimed 70,000 victims in the UK

-

18 Apr 2024

CrowdStrike extends cloud security to Mission Cloud customers

By Alexander Culafi

CrowdStrike Falcon Cloud Security and Falcon Complete Cloud Detection and Response (CDR) will be made available through the Mission Cloud One AWS MSP platform.

-

18 Apr 2024

CSA warns of emerging security risks with cloud and AI

By Aaron Tan

Few users appreciate the security risks of cloud and have the expertise to implement the complex security controls, says CSA chief executive David Koh

-

18 Apr 2024

TUC publishes legislative proposal to protect workers from AI

By Sebastian Klovig Skelton

Proposed bill for regulating artificial intelligence in the UK seeks to translate well-meaning principles and values into concrete rights and obligations that protect workers from systems that make ‘high-risk’ decisions about them

-

18 Apr 2024

Lords to challenge controversial DWP benefits bank account surveillance powers

By Bill Goodwin

Members of the House of Lords are pressing for amendments to the Data Protection and Digital Information Bill following concerns over government powers to monitor the bank accounts of people receiving benefits

-

18 Apr 2024

Gov.uk One Login accounts on the rise

By Lis Evenstad

Since August 2023, more than 1.8 million people verified their identity using the Gov.uk One Login app, while face-to-face verifications have also increased

-

18 Apr 2024

IT expert who helped expose Post Office scandal offers to investigate second controversial system

By Karl Flinders

IT expert Jason Coyne, who played a critical part in exposing the Post Office Horizon scandal, said he would examine the Capture System

-

17 Apr 2024

Certinia adds AI capabilities to PSA cloud suite

By Jim O'Donnell

The PSA vendor adds AI functionality to its professional services cloud applications that are designed to help services firms manage customers, personnel, resources and projects.

-

17 Apr 2024

DHS funding breathes fresh life into SBOMs

By Beth Pariseau

Protobom, now an OpenSSF sandbox project, is the first of multiple software supply chain security efforts funded under the Silicon Valley Innovation Program.

-

17 Apr 2024

AI-fueled efficiency a focus for SAS analytics platform

By Eric Avidon

The vendor's latest product development plans include an AI assistant and prebuilt AI models that enable workers to be more productive as they explore and analyze data.

-

17 Apr 2024

Lawmakers concerned about deepfake AI's election impact

By Makenzie Holland

Lawmakers want Congress to intervene and tackle AI manipulations that could affect U.S. elections. However, legislation has yet to advance to the House or Senate floor.

-

17 Apr 2024

Looking closer at Microsoft's investment in UAE AI vendor G42

By Esther Ajao

The tech giant will own a minority stake, and G42's LLM will be on Azure. The move helps the cloud provider expand globally and helps the U.S. court the UAE away from China.

-

17 Apr 2024

VMware’s APAC customers weigh in on licensing changes

By Aaron Tan

VMware customers in the region are concerned about higher costs even as they see the benefits of subscription-based pricing and product bundling in the longer term

-

17 Apr 2024

Westcoast picks up Spire

By Simon Quicke

Distributor Westcoast adds components specialist Spire to bolster the portfolio and add more depth to the Group

-

17 Apr 2024

Mandiant formally pins Sandworm cyber attacks on APT44 group

By Alex Scroxton

Mandiant has formally attributed a long-running campaign of cyber attacks by a Russian state actor known as Sandworm to a newly designated advanced persistent threat group to be called APT44

-

17 Apr 2024

Wireless Logic enters Starlink orbit for IoT deployments

By Joe O’Halloran

Global internet of things connectivity platform provider joins forces with satellite broadband constellation to offer fully managed service combining cellular and satellite connectivity to expand options for IoT and enterprises worldwide

-

17 Apr 2024

CIO interview: Attiq Qureshi, chief digital information officer, Manchester United

By Bryan Glick

For any IT professional who is also a football fan, there can’t be many more appealing jobs than CIO of a Premier League club – we find out what it’s like to be the IT chief at Manchester United

-

17 Apr 2024

BMC boosts network observability with Netreo acquisition

By Joe O’Halloran

Autonomous digital enterprise operations technology firm announces takeover of provider of smart and secure IT network and application observability solutions