cybersecurity

What is cybersecurity?

Cybersecurity is the practice of protecting internet-connected systems such as hardware, software and data from cyberthreats. It's used by individuals and enterprises to protect against unauthorized access to data centers and other computerized systems.

An effective cybersecurity strategy can provide a strong security posture against malicious attacks designed to access, alter, delete, destroy or extort an organization's or user's systems and sensitive data. Cybersecurity is also instrumental in preventing attacks designed to disable or disrupt a system's or device's operations.

An ideal cybersecurity approach should have multiple layers of protection across any potential access point or attack surface. This includes a protective layer for data, software, hardware and connected networks. In addition, all employees within an organization who have access to any of these endpoints should be trained on the proper compliance and security processes. Organizations also use tools such as unified threat management systems as another layer of protection against threats. These tools can detect, isolate and remediate potential threats and notify users if additional action is needed.

Cyberattacks can disrupt or immobilize their victims through various means, so creating a strong cybersecurity strategy is an integral part of any organization. Organizations should also have a disaster recovery plan in place so they can quickly recover in the event of a successful cyberattack.

Why is cybersecurity important?

With the number of users, devices and programs in the modern enterprise increasing along with the amount of data -- much of which is sensitive or confidential -- cybersecurity is more important than ever. The growing volume and sophistication of cyberattackers and attack techniques compound the problem.

Without a proper cybersecurity strategy in place -- and staff properly trained on security best practices -- malicious actors can bring an organization's operations to a screeching halt.

What are the elements of cybersecurity and how does it work?

The cybersecurity field can be broken down into several sections. Coordinating them within the organization is crucial to the success of a cybersecurity program. These sections include the following:

- Application security.

- Information or data security.

- Network security.

- Disaster recovery and business continuity planning.

- Operational security.

- Cloud security.

- Critical infrastructure security.

- Physical security.

- End-user education.

Maintaining cybersecurity in a constantly evolving threat landscape is a challenge for all organizations. Traditional reactive approaches, in which resources were put toward protecting systems against the biggest known threats while lesser-known threats were undefended, are no longer a sufficient tactic. To keep up with changing security risks, a more proactive and adaptive approach is necessary. Several key cybersecurity advisory organizations offer guidance. For example, the National Institute of Standards and Technology (NIST) recommends adopting continuous monitoring and real-time assessments as part of a risk assessment framework to defend against known and unknown threats.

What are the benefits of cybersecurity?

The benefits of implementing and maintaining cybersecurity practices include the following:

- Business protection against cyberattacks and data breaches.

- Protection of data and networks.

- Prevention of unauthorized user access.

- Improved recovery time after a breach.

- Protection for end users and endpoint devices.

- Regulatory compliance.

- Business continuity.

- Improved confidence in the company's reputation and greater trust among developers, partners, customers, stakeholders and employees.

What are the different types of cybersecurity threats?

Keeping up with new technologies, security trends and threat intelligence is a challenging task. It's necessary in order to protect information and other assets from cyberthreats, which take many forms. Types of cyberthreats include the following:

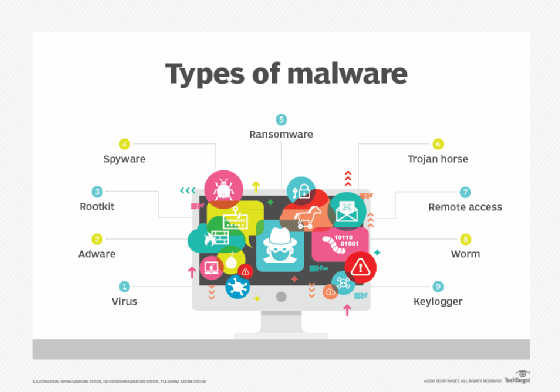

- Malware is malicious software, meaning any file or program designed to harm a user's computer. Different types of malware include worms, viruses, Trojans and spyware.

- Ransomware is a type of malware that involves an attacker locking the victim's computer system files -- typically through encryption -- and demanding a payment to decrypt and unlock them.

- Social engineering is an attack that relies on human interaction. It tricks users into breaking security procedures to gain sensitive information that's typically protected.

- Phishing is a form of social engineering in which attackers send fraudulent email or text messages that resemble those from reputable or known sources. These attacks are often untargeted, and their intent is to steal sensitive data, such as credit card or login information.

- Spear phishing is a type of phishing that has an intended target user, organization or business.

- Insider threats are security breaches or losses caused by humans -- for example, employees, contractors or customers. Insider threats can be malicious or negligent in nature.

- Distributed denial-of-service (DDoS) attacks are those in which multiple systems disrupt the traffic of a targeted system, such as a server, website or other network resource. By flooding the target with messages, connection requests or packets, DDoS attacks can slow the system or crash it, preventing legitimate traffic from using it.

- An advanced persistent threat (APT) is a prolonged targeted attack in which an attacker infiltrates a network and remains undetected for long periods of time. The goal of an APT is typically to steal data.

- Man-in-the-middle (MitM) attacks are eavesdropping attacks that involve an attacker intercepting and relaying messages between two parties who believe they're communicating with each other.

- SQL injection is a technique that attackers use to gain access to a web application database by adding a string of malicious SQL code to a database query. A SQL injection provides access to sensitive data and enables the attackers to execute malicious SQL statements.

Other common types of attacks include botnets, drive-by-download attacks, exploit kits, malvertising, vishing, credential stuffing attacks, cross-site scripting attacks, keyloggers and zero-day exploits.
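To make the SQL injection entry in the list above more concrete, the following minimal sketch contrasts a query built by string concatenation with a parameterized query. It uses Python's built-in `sqlite3` module; the `users` table, its contents and the function names are hypothetical and exist only for illustration.

```python
import sqlite3


def build_demo_db() -> sqlite3.Connection:
    """Create an in-memory database with a hypothetical users table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, secret TEXT)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [("alice", "a-secret"), ("bob", "b-secret")],
    )
    return conn


def find_user_unsafe(conn: sqlite3.Connection, name: str) -> list:
    # Vulnerable: user input is pasted directly into the SQL text,
    # so quotes in the input can change the structure of the query.
    query = f"SELECT name, secret FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, name: str) -> list:
    # Safer: the driver binds the value, so it is treated as data only.
    return conn.execute(
        "SELECT name, secret FROM users WHERE name = ?", (name,)
    ).fetchall()


conn = build_demo_db()
payload = "nobody' OR '1'='1"  # classic injection string
print(len(find_user_unsafe(conn, payload)))  # 2: every row is returned
print(len(find_user_safe(conn, payload)))    # 0: no user has that name
```

Parameterized queries are one common mitigation. They are usually combined with input validation and least-privilege database accounts rather than used alone.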

What are the top cybersecurity challenges?

Cybersecurity is continually challenged by hackers, data loss, privacy, risk management and changing cybersecurity strategies. And the number of cyberattacks isn't expected to decrease anytime soon. Moreover, additional entry points for attacks, such as the internet of things, and a growing attack surface heighten the need to secure networks and devices.

The following major challenges must be continuously addressed.

Evolving threats

One of the most problematic elements of cybersecurity is the evolving nature of security risks. As new technologies emerge -- and as technology is used in new or different ways -- new attack avenues are developed. Keeping up with these frequent changes and advances in attacks, as well as updating practices to protect against them, can be challenging. Issues include ensuring all elements of cybersecurity are continually updated to protect against potential vulnerabilities. This can be especially difficult for smaller organizations that don't have adequate staff or in-house resources.

Data deluge

Organizations can gather a lot of potential data on the people who use their services. With more data being collected comes the potential for a cybercriminal to steal personally identifiable information (PII). For example, an organization that stores PII in the cloud could be subject to a ransomware attack.

Cybersecurity awareness training

Cybersecurity programs should also address end-user education. Employees can accidentally bring threats and vulnerabilities into the workplace on their laptops or mobile devices. Likewise, they might act imprudently -- for example, clicking links or downloading attachments from phishing emails.

Regular security awareness training can help employees do their part in keeping their company safe from cyberthreats.

Workforce shortage and skills gap

Another cybersecurity challenge is a shortage of qualified cybersecurity personnel. As the amount of data collected and used by businesses grows, the need for cybersecurity staff to analyze, manage and respond to incidents also increases. In 2023, cybersecurity association ISC2 estimated the workforce gap between needed cybersecurity jobs and available security professionals at 4 million, a 12.6% increase over 2022.

Supply chain attacks and third-party risks

Organizations can do their best to maintain security, but if the partners, suppliers and third-party vendors that access their networks don't act securely, all that effort is for naught. Software- and hardware-based supply chain attacks are becoming increasingly difficult security challenges. Organizations must address third-party risk in the supply chain and reduce software supply issues, for example, by using software bills of materials.
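As a rough illustration of how a software bill of materials (SBOM) can support third-party risk checks, the sketch below compares the components listed in an SBOM against a list of known-vulnerable versions. The SBOM structure is a heavily simplified assumption, loosely modeled on a `components` list of name and version pairs. The package names, versions and advisory list are all hypothetical.

```python
import json

# Hypothetical, simplified SBOM. Real SBOM formats carry many more fields.
SBOM_JSON = """
{
  "components": [
    {"name": "example-web-framework", "version": "2.1.0"},
    {"name": "example-json-parser", "version": "1.4.2"},
    {"name": "example-crypto-lib", "version": "0.9.8"}
  ]
}
"""

# Hypothetical advisory data: (name, version) pairs with known issues.
KNOWN_VULNERABLE = {
    ("example-json-parser", "1.4.2"),
    ("example-image-lib", "3.0.0"),
}


def flag_vulnerable_components(sbom_text: str, advisories: set) -> list:
    """Return the (name, version) pairs in the SBOM that match an advisory."""
    sbom = json.loads(sbom_text)
    flagged = []
    for component in sbom.get("components", []):
        key = (component["name"], component["version"])
        if key in advisories:
            flagged.append(key)
    return flagged


print(flag_vulnerable_components(SBOM_JSON, KNOWN_VULNERABLE))
# [('example-json-parser', '1.4.2')]
```

In practice, the advisory data would come from a vulnerability database and matching would account for version ranges, which this sketch leaves out.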

Cybersecurity best practices

To minimize the chance of a cyberattack, it's important to implement and follow a set of best practices that includes the following:

- Keep software up to date. Be sure to keep all software, including antivirus software, up to date. This ensures attackers can't take advantage of known vulnerabilities that software companies have already patched.

- Change default usernames and passwords. Malicious actors might be able to easily guess default usernames and passwords on factory preset devices to gain access to a network.

- Use strong passwords. Employees should select passwords that use a combination of letters, numbers and symbols that will be difficult to hack using a brute-force attack or guessing. Employees should also change their passwords often.

- Use multifactor authentication (MFA). MFA requires at least two identity components to gain access, which minimizes the chances of a malicious actor gaining access to a device or system.

- Train employees on proper security awareness. This helps employees properly understand how seemingly harmless actions could leave a system vulnerable to attack. This should also include training on how to spot suspicious emails to avoid phishing attacks.

- Implement an identity and access management (IAM) system. IAM defines the roles and access privileges for each user in an organization, as well as the conditions under which they can access certain data.

- Implement an attack surface management system. This process encompasses the continuous discovery, inventory, classification and monitoring of an organization's IT infrastructure. It ensures security covers all potentially exposed IT assets accessible from within an organization.

- Use a firewall. Firewalls filter inbound and outbound traffic and block unnecessary connections, which helps prevent unauthorized access and exposure to potentially malicious content.

- Implement a disaster recovery process. In the event of a successful cyberattack, a disaster recovery plan helps an organization maintain operations and restore mission-critical data.
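The MFA practice in the list above can be illustrated with a time-based one-time password (TOTP), one common second factor. The following is a minimal sketch of the algorithm described in RFC 4226 and RFC 6238, using only the Python standard library. The `verify_login` helper and its parameters are hypothetical, and the sketch is not a production implementation.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password (RFC 4226)."""
    # HMAC-SHA1 over the counter encoded as an 8-byte big-endian integer.
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    # Dynamic truncation: the low 4 bits of the last byte select an offset.
    offset = digest[-1] & 0x0F
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(secret: bytes, for_time: Optional[float] = None,
         step: int = 30, digits: int = 6) -> str:
    """Time-based one-time password (RFC 6238) with a 30-second step."""
    now = time.time() if for_time is None else for_time
    return hotp(secret, int(now // step), digits)


def verify_login(password_ok: bool, secret: bytes, submitted_code: str,
                 for_time: Optional[float] = None) -> bool:
    """Hypothetical check: both the password and the TOTP code must pass."""
    expected = totp(secret, for_time)
    # compare_digest avoids leaking information through timing differences.
    return password_ok and hmac.compare_digest(expected, submitted_code)


# Test secret taken from the RFC examples. Never hard-code real secrets.
shared_secret = b"12345678901234567890"
print(totp(shared_secret, for_time=59, digits=8))  # 94287082 (RFC 6238 vector)
```

Real deployments typically also accept codes from adjacent time windows to tolerate clock drift, and they limit the number of verification attempts.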

How is automation used in cybersecurity?

Automation has become an integral component of keeping companies protected from the increasing number and sophistication of cyberthreats. Using artificial intelligence (AI) and machine learning in areas with high-volume data streams can help improve cybersecurity in the following three main categories:

- Threat detection. AI platforms can analyze data and recognize known threats, as well as predict novel threats that use newly discovered attack techniques that bypass traditional security.

- Threat response. AI platforms create and automatically enact security protections.

- Human augmentation. Security pros are often overloaded with alerts and repetitive tasks. AI can help eliminate alert fatigue by automatically triaging low-risk alarms and automating big data analysis and other repetitive tasks, freeing humans for more sophisticated tasks.

Other benefits of automation in cybersecurity include attack classification, malware classification, traffic analysis, compliance analysis and more.
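As a toy illustration of automated threat detection and alert triage, the sketch below flags hosts whose failed-login counts sit far above a historical baseline. It uses a simple z-score as a statistical stand-in rather than a machine learning model. The host names, counts and threshold are hypothetical.

```python
from statistics import mean, stdev


def flag_anomalies(baseline: list, observed: dict, threshold: float = 3.0) -> list:
    """Return hosts whose count is more than `threshold` standard
    deviations above the baseline mean."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    flagged = []
    for host, count in observed.items():
        # Guard against a zero standard deviation in a flat baseline.
        z_score = (count - mu) / sigma if sigma > 0 else 0.0
        if z_score > threshold:
            flagged.append(host)
    return flagged


# Hypothetical failed-login counts per hour during normal operation.
baseline_counts = [4, 6, 5, 7, 5, 6, 4, 5]

# Hypothetical counts for the current hour, per host.
current_counts = {"web-01": 6, "web-02": 48, "db-01": 5}

print(flag_anomalies(baseline_counts, current_counts))  # ['web-02']
```

Only the outlier is escalated, which reflects the triage idea described above: routine readings are filtered out automatically so analysts can focus on the unusual ones.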

Cybersecurity vendors and tools

Vendors in the cybersecurity field offer a variety of security products and services that fall into the following categories:

- IAM.

- Firewalls.

- Endpoint protection.

- Antimalware and antivirus.

- Intrusion detection and prevention systems.

- Data loss prevention.

- Endpoint detection and response.

- Security information and event management.

- Encryption.

- Vulnerability scanners.

- Virtual private networks.

- Cloud workload protection platform.

- Cloud access security broker.

Examples of cybersecurity vendors include the following:

- Check Point Software.

- Cisco.

- Code42 Software Inc.

- CrowdStrike.

- FireEye.

- Fortinet.

- IBM.

- Imperva.

- KnowBe4, Inc.

- McAfee.

- Microsoft.

- Palo Alto Networks.

- Rapid7.

- Splunk.

- Symantec by Broadcom.

- Trend Micro.

- Trustwave.

What are the career opportunities in cybersecurity?

As the cyberthreat landscape continues to grow and new threats emerge, organizations need individuals with cybersecurity awareness and hardware and software skills.

IT professionals and other computer specialists are needed in the following security roles:

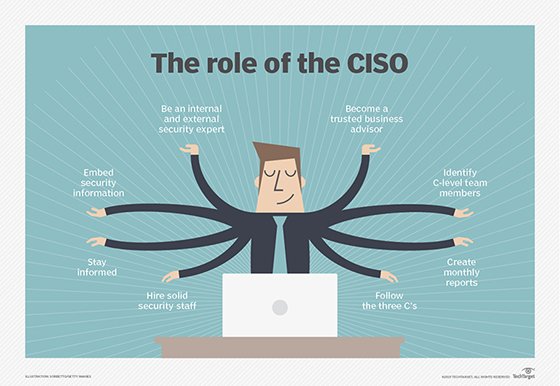

- Chief information security officer (CISO). A CISO is the person who implements the security program across the organization and oversees the IT security department's operations.

- Chief security officer (CSO). A CSO is the executive responsible for the physical security and cybersecurity of a company.

- Computer forensics analysts. They investigate computers and digital devices involved in cybercrimes to prevent a cyberattack from happening again. Computer forensics analysts uncover how a threat actor gained access to a network, identifying security gaps. This position is also in charge of preparing evidence for legal purposes.

- Security engineers. These IT professionals protect company assets from threats with a focus on quality control within the IT infrastructure.

- Security architects. These people are responsible for planning, analyzing, designing, testing, maintaining and supporting an enterprise's critical infrastructure.

- Security analysts. These IT professionals plan security measures and controls, protect digital files, and conduct both internal and external security audits.

- Security software developers. These IT professionals develop software and ensure it's secured to help prevent potential attacks.

- Network security architects. Their responsibilities include defining network policies and procedures and configuring network security tools like antivirus and firewall configurations. Network security architects improve the security strength while maintaining network availability and performance.

- Penetration testers. These are ethical hackers who test the security of systems, networks and applications, seeking vulnerabilities that malicious actors could exploit.

- Threat hunters. These IT professionals are threat analysts who aim to uncover vulnerabilities and attacks and mitigate them before they compromise a business.

Other cybersecurity careers include security consultants, data protection officers, cloud security architects, security operations managers and analysts, security investigators, cryptographers and security administrators.

Entry-level cybersecurity positions typically require one to three years of experience and a bachelor's degree in business or liberal arts, as well as certifications such as CompTIA Security+. Jobs in this area include associate cybersecurity analysts and network security analyst positions, as well as cybersecurity risk and SOC analysts.

Mid-level positions typically require three to five years of experience. These positions typically include security engineers, security analysts and forensics analysts.

Senior-level positions typically require five to eight years of experience. They typically include positions such as senior cybersecurity risk analyst, principal application security engineer, penetration tester, threat hunter and cloud security analyst.

Higher-level positions generally require more than eight years of experience and typically encompass C-level positions.

Advancements in cybersecurity technology

As newer technologies evolve, they can be applied to cybersecurity to advance security practices. Some recent technology trends in cybersecurity include the following:

- Security automation through AI. While AI and machine learning can aid attackers, they can also be used to automate cybersecurity tasks. AI is useful for analyzing large data volumes to identify patterns and for making predictions on potential threats. AI tools can also suggest possible fixes for vulnerabilities and identify patterns of unusual behavior.

- Zero-trust architecture. Zero-trust principles assume that no users or devices should be considered trustworthy without verification. Implementing a zero-trust approach can reduce both the frequency and severity of cybersecurity incidents.

- Behavioral biometrics. This cybersecurity method uses machine learning to analyze user behavior. It can detect patterns in the way users interact with their devices to identify potential threats, such as if someone else has access to their account.

- Continued improvements in response capabilities. Organizations must be continually prepared for large-scale ransomware attacks so they can respond to a threat without paying any ransom and without losing any critical data.

- Quantum computing. While this technology is still in its infancy and has a long way to go before it sees widespread use, quantum computing will have a large impact on cybersecurity practices -- introducing new concepts such as quantum cryptography.
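The zero-trust principle listed above, that no user or device is trusted without verification, can be sketched as a per-request access decision. The example below is a simplified assumption of how such a check might look. The `AccessRequest` fields, role names and resource names are hypothetical and not drawn from any specific product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessRequest:
    user: str
    role: str
    mfa_verified: bool       # did the user pass a second factor?
    device_compliant: bool   # does the device meet posture requirements?
    resource: str


# Hypothetical least-privilege policy: which roles may reach which resources.
ROLE_PERMISSIONS = {
    "analyst": {"siem-dashboard"},
    "admin": {"siem-dashboard", "firewall-config"},
}


def is_allowed(request: AccessRequest) -> bool:
    """Evaluate every request on its own; network location grants nothing."""
    if not request.mfa_verified:
        return False
    if not request.device_compliant:
        return False
    # Unknown roles get an empty permission set, so access is denied by default.
    return request.resource in ROLE_PERMISSIONS.get(request.role, set())


print(is_allowed(AccessRequest("dana", "analyst", True, True, "siem-dashboard")))   # True
print(is_allowed(AccessRequest("dana", "analyst", True, True, "firewall-config")))  # False
print(is_allowed(AccessRequest("lee", "admin", True, False, "firewall-config")))    # False
```

The design choice worth noting is the default-deny stance: access is granted only when identity, device state and role all check out for the specific resource requested.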

Cybersecurity has many facets that require a keen and consistent eye for successful implementation. Improve your own cybersecurity implementation using these cybersecurity best practices and tips.